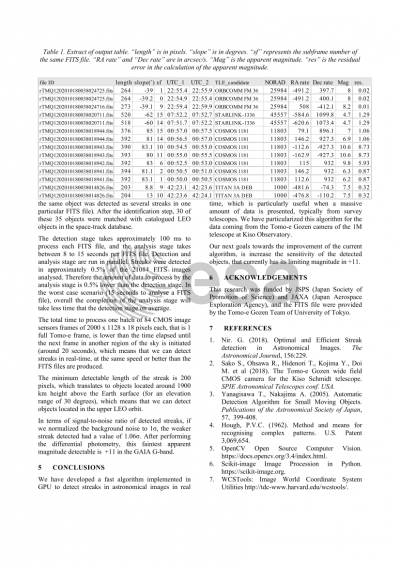

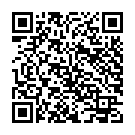

Abstract

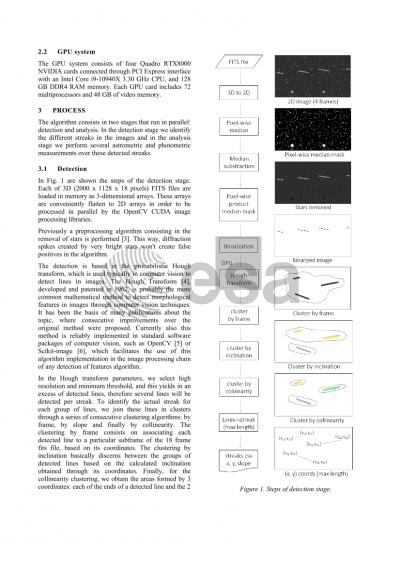

The Tomo-e camera, attached to the Schmidt telescope at Kiso Observatory in Japan, comprises 84 CMOS image sensors of 2000 x 1128 pixels each and covers a field of view 9 degrees in diameter on the sky. The telescope performs several whole-sky surveys each night, generating approximately 1.7 TB of data per hour. While surveying, the telescope tracks at the sidereal rate, so objects orbiting the Earth appear in the camera images as streaks whose length and brightness depend mainly on the object's size and slant range.

We are developing a streak detection algorithm that runs at least as fast as the image data are produced, in the form of FITS files, during the sky survey. Such an algorithm can help detect new space debris in near real time and obtain accurate orbital information for objects in orbit.

The algorithm is a modified version of the Hough transform, which is typically used in computer vision to detect lines in images. We set the Hough transform parameters to high resolution and a minimum threshold, which yields an excess of detected lines. We then identify the actual streaks by grouping these lines into clusters with a collinearity algorithm and selecting the outermost coordinates of each cluster. In this way we maximize both the number of detected streaks and the accuracy of each streak's start and end coordinates. We also identify the apparent direction of motion of each object by comparing streaks of the same object in consecutive frames.
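The clustering step can be illustrated with a minimal sketch. It assumes the Hough stage has already produced line segments as `(x1, y1, x2, y2)` tuples of non-zero length. The function names, the greedy grouping strategy, and the `dist_tol` tolerance are all hypothetical choices made for illustration, not the collinearity algorithm actually used in this work.

```python
import math

def point_line_distance(px, py, seg):
    """Perpendicular distance from (px, py) to the infinite line through seg."""
    x1, y1, x2, y2 = seg
    dx, dy = x2 - x1, y2 - y1
    return abs(dx * (py - y1) - dy * (px - x1)) / math.hypot(dx, dy)

def cluster_collinear(segments, dist_tol=2.0):
    """Greedily group segments whose two endpoints both lie within dist_tol
    pixels of the line through the first segment of an existing cluster."""
    clusters = []
    for seg in segments:
        for cluster in clusters:
            ref = cluster[0]
            if (point_line_distance(seg[0], seg[1], ref) <= dist_tol
                    and point_line_distance(seg[2], seg[3], ref) <= dist_tol):
                cluster.append(seg)
                break
        else:
            # No existing cluster matched, so this segment starts a new one
            clusters.append([seg])
    return clusters

def streak_endpoints(cluster):
    """Return the two outermost endpoints of a cluster, ordered along the
    direction of the cluster's first segment."""
    x1, y1, x2, y2 = cluster[0]
    length = math.hypot(x2 - x1, y2 - y1)
    ux, uy = (x2 - x1) / length, (y2 - y1) / length
    points = [(s[0], s[1]) for s in cluster] + [(s[2], s[3]) for s in cluster]

    def position(p):
        # Signed position of an endpoint along the reference direction
        return (p[0] - x1) * ux + (p[1] - y1) * uy

    return min(points, key=position), max(points, key=position)

# Three overlapping detections of one diagonal streak plus one horizontal streak
segments = [(10, 10, 50, 50), (30, 30, 80, 80), (0, 100, 100, 100), (45, 46, 60, 61)]
clusters = cluster_collinear(segments)
streaks = [streak_endpoints(c) for c in clusters]
```

This sketch would also merge two distinct streaks that happen to lie on the same line, so a practical version would probably add a limit on the gap between segments along the line.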

The algorithm was first implemented as a Python script running on a standard CPU-based computer, in order to characterize and fine-tune its parameters for maximum performance. For this purpose we added a set of synthetic streaks with different brightness and brightness variability to the images. All streaks with an absolute brightness value 15% or more above the background noise were detected correctly.
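Injecting a synthetic streak can be sketched as follows, using NumPy. The helper name `add_synthetic_streak`, the one-pixel streak width, the constant amplitude, and the simulated Gaussian background are illustrative assumptions. In particular, expressing the amplitude as 15% of the mean background level is only one possible reading of the brightness criterion above.

```python
import numpy as np

def add_synthetic_streak(image, start, end, amplitude):
    """Return a float copy of image with a one-pixel-wide straight streak
    of constant amplitude added between start and end, given as (x, y)."""
    out = image.astype(np.float64, copy=True)
    (x0, y0), (x1, y1) = start, end
    # One sample per pixel along the longer axis, so no pixel is visited twice
    n = int(max(abs(x1 - x0), abs(y1 - y0))) + 1
    xs = np.rint(np.linspace(x0, x1, n)).astype(int)
    ys = np.rint(np.linspace(y0, y1, n)).astype(int)
    out[ys, xs] += amplitude
    return out

# Simulated background (assumed values): mean level 1000, noise sigma 10
rng = np.random.default_rng(0)
background = rng.normal(1000.0, 10.0, size=(64, 64))

# Streak amplitude set to 15% of the mean background level (an assumption)
streaked = add_synthetic_streak(background, (5, 10), (50, 40), 0.15 * 1000.0)
```

Brightness variability along the streak could be modelled by passing an array of per-pixel amplitudes instead of a scalar.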

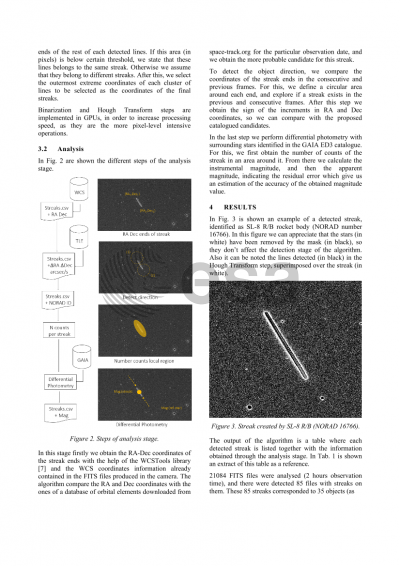

The algorithm is currently being implemented on a GPU system consisting of two NVIDIA Quadro RTX8000 GPUs. Each FITS file from the Tomo-e camera contains 18 consecutive frames of 0.5 seconds exposure time, giving files of 40.61 MPixels each. Each file is loaded into GPU memory as a 3-dimensional array and processed in parallel by a binarization step followed by a Hough transform, executed across a massive grid of threads; a plain table of all detected streaks is then produced and sent back to CPU memory. Current results show a 5x improvement in processing speed when using the GPU system instead of the CPU of the same computer. The total time to process one batch of 84 CMOS sensor files of 2000 x 1128 x 18 pixels each, that is, one full Tomo-e frame, is shorter than the time that elapses before the next frame, in another region of the sky, begins (around 20 seconds). We can therefore detect streaks in real time, at the same speed at which the FITS files are produced.
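The figures quoted above imply a minimum processing throughput, which the short calculation below works out. It takes the 20-second interval as exact, although the text gives it only as an approximate value; the variable names are illustrative.

```python
SENSOR_WIDTH = 2000      # pixels
SENSOR_HEIGHT = 1128     # pixels
FRAMES_PER_FILE = 18     # consecutive 0.5 s exposures per FITS file
NUM_SENSORS = 84         # one FITS file per CMOS sensor
TIME_BUDGET_S = 20.0     # approximate interval before the next Tomo-e frame

pixels_per_file = SENSOR_WIDTH * SENSOR_HEIGHT * FRAMES_PER_FILE
pixels_per_batch = pixels_per_file * NUM_SENSORS
required_rate = pixels_per_batch / TIME_BUDGET_S

print(f"{pixels_per_file / 1e6:.2f} MPixels per FITS file")           # 40.61
print(f"{pixels_per_batch / 1e9:.2f} GPixels per full Tomo-e frame")  # 3.41
print(f"{required_rate / 1e6:.1f} MPixels/s needed to keep up")       # 170.6
```

Under these assumptions, real-time operation requires the pipeline to sustain roughly 170 MPixels per second across the two GPUs, including the transfers to and from GPU memory.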
