
Abstract

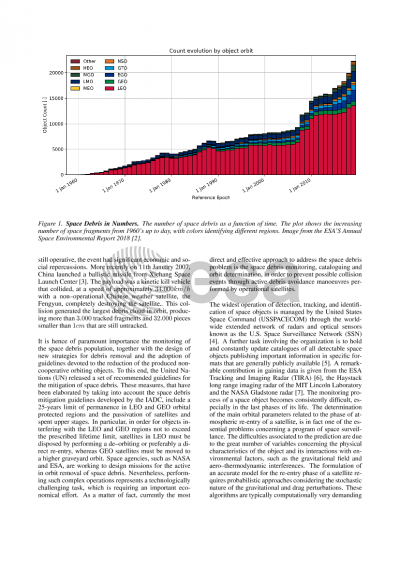

The growing population of space debris and near-Earth objects (NEOs) is becoming a severe threat to on-ground and in-space infrastructure, because potential collisions may seriously damage these active systems. It is therefore of paramount importance to maintain up-to-date catalogues containing estimates of the orbital parameters of the whole trackable population. Space surveillance activities, such as in-orbit collision avoidance and re-entry campaigns, are typically based on publicly available orbital parameters in the form of two-line elements (TLEs), which are accessible only for catalogued and unclassified space objects. Unfortunately, TLEs generally degrade within a few days or even faster, which makes the information they provide not completely reliable: object positions may be affected by errors of the order of several kilometers, mainly in the in-track direction, making the orbital prediction unreliable both in the short term (a few hours, in the specific case of objects at the end of their orbital life) and in the long term (a few days).

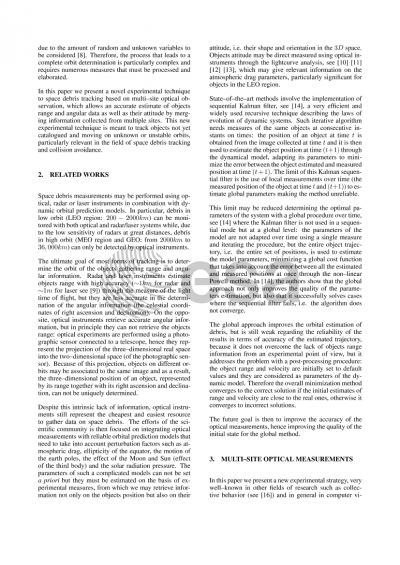

In this paper we propose a relatively cheap way to improve short- and long-term orbital prediction using multi-site optical measurements, i.e. collecting optical data of the same objects simultaneously from two or more sites. We plan to use the optical measurements for joint astrometric and photometric observations: by merging the astrometric information from the multiple sites we accurately retrieve the objects' positions in 3D space, while by merging the photometric information we accurately retrieve the objects' attitude. On one side, by reconstructing the 3D position of the objects from multi-site measurements we drastically reduce the in-track error on the orbital parameters (from kilometers to tens of meters, depending on the experimental set-up), producing accurate TLEs at epoch and, as a consequence, improving the quality of the TLE predictions in both the short and the long term. On the other side, using lightcurves of the objects taken from different points of view, we may retrieve their attitude in much more detail than when using the lightcurve from a single optical measurement, and we may determine the shape and the orientation of the object we are looking at. These two elements (accurate 3D position and attitude) are essential for long-term prediction, where the force model estimation has to be fed with information on the orientation, the shape and the position of the objects.
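To make the geometric idea behind the multi-site position reconstruction concrete, the following is a minimal sketch of two-site triangulation. It is not the method of the paper: it assumes that both sites observe the object at exactly the same instant, that each astrometric measurement has already been converted into a line-of-sight direction vector, and that site positions and directions are expressed in one common Cartesian frame. The function name `triangulate` and the helpers `dot` and `unit` are hypothetical, introduced only for this illustration. The estimate is the midpoint of the shortest segment joining the two lines of sight, and the length of that segment gives a rough consistency check on the measurements.

```python
import math


def dot(u, v):
    """Dot product of two 3-vectors."""
    return sum(a * b for a, b in zip(u, v))


def unit(v):
    """Return v scaled to unit length."""
    n = math.sqrt(dot(v, v))
    return tuple(a / n for a in v)


def triangulate(p1, d1, p2, d2):
    """Estimate a 3D position from two simultaneous lines of sight.

    p1, p2: site positions (assumed common Cartesian frame, any length unit).
    d1, d2: line-of-sight directions from each site (need not be normalized).
    Returns (midpoint, gap): the midpoint of the shortest segment between
    the two lines and the length of that segment.
    """
    d1, d2 = unit(d1), unit(d2)
    w = tuple(a - b for a, b in zip(p1, p2))
    b = dot(d1, d2)
    d = dot(d1, w)
    e = dot(d2, w)
    denom = 1.0 - b * b
    if denom < 1e-12:
        # Parallel lines of sight carry no range information.
        raise ValueError("lines of sight are nearly parallel")
    # Parameters along each line that minimize the distance between them.
    t1 = (b * e - d) / denom
    t2 = (e - b * d) / denom
    q1 = tuple(p + t1 * c for p, c in zip(p1, d1))
    q2 = tuple(p + t2 * c for p, c in zip(p2, d2))
    midpoint = tuple((a + c) / 2.0 for a, c in zip(q1, q2))
    gap = math.sqrt(sum((a - c) ** 2 for a, c in zip(q1, q2)))
    return midpoint, gap


# Illustrative numbers only: two sites 100 km apart, object about 400 km up.
site_a = (0.0, 0.0, 0.0)
site_b = (100.0, 0.0, 0.0)
target = (50.0, 30.0, 400.0)
los_a = tuple(t - s for t, s in zip(target, site_a))
los_b = tuple(t - s for t, s in zip(target, site_b))
position, gap = triangulate(site_a, los_a, site_b, los_b)
```

With noise-free directions the two lines intersect, so `gap` is essentially zero and `position` reproduces the target. With real measurements the gap is non-zero, and a longer baseline between the sites generally makes the reconstructed range less sensitive to angular errors.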
