
Abstract

Far less than 10% of the potentially dangerous space debris currently in orbit is trackable. This debris includes objects larger than 1 cm, which can damage or even destroy functioning spacecraft and start a fragmentation cascade. Optical methods offer a promising solution to this “blindness”.

The Institute of Technical Physics of the German Aerospace Centre (DLR) aims to combine a staring camera system with a ranging laser for more reliable orbit determination of space debris. The goal is to process the data from a passive optical staring camera in real time so that laser ranging can be performed during the same overpass. The resulting precise orbit estimate allows the object to be found again reliably in future passes.

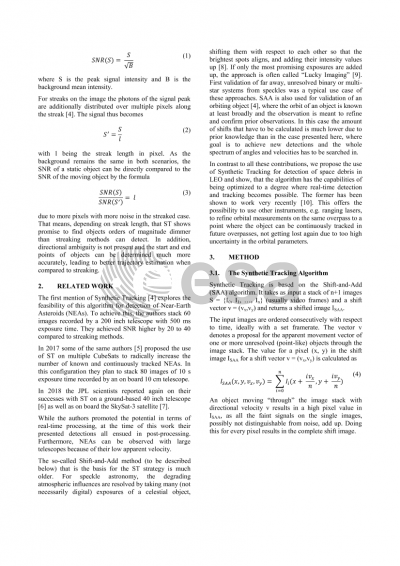

Passive optical systems are limited in their ability to detect and track unknown objects in Low Earth Orbit (LEO), especially very dim ones. The established streaking methods, which use long exposure times, lose signal because the photons scattered by the moving object are distributed over several dozen to hundreds of image pixels and form a streak. This limits the signal-to-noise ratio (SNR) and makes it hard to recover the signal from the image. Additionally, the start and end points of the streak need to be determined to resolve the direction ambiguity. The angular position is then measured using astrometric solving.
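
As a rough illustration of this signal loss, consider a simplified model (an assumption made here, not taken from the measurements in this work): the object contributes a total of $S$ photoelectrons during the exposure, spread evenly over $n$ pixels, and each pixel carries independent noise with standard deviation $\sigma$. Even if all $n$ streak pixels are summed, the noise adds in quadrature:

$$
\mathrm{SNR}_{\text{streak}} = \frac{S}{\sigma\sqrt{n}}, \qquad \mathrm{SNR}_{\text{point}} = \frac{S}{\sigma}
$$

Under these assumptions, a streak covering $n = 100$ pixels has an SNR ten times lower than the same signal concentrated in a single pixel.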

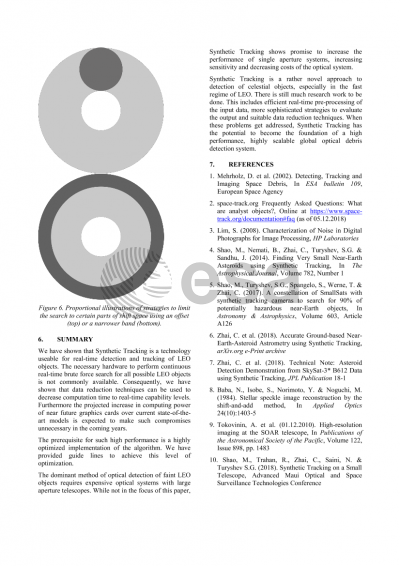

The detection and tracking of Near-Earth Asteroids (NEAs) faces the same problems. In this field, recent years have seen a revival of the shift-and-add algorithm under the name “synthetic tracking”. In contrast to the streaking method, this approach mitigates the spreading of photons over many pixels by taking many images with short exposure times, so that the light is captured in one or a few pixels per image. The images are then shifted by a certain vector per frame and the pixel values are added. The resulting image has a signal peak when the shift vector is equal to the (reversed) apparent motion vector of the object. However, the exposure times used for synthetic tracking of NEAs, ranging from one to a couple of seconds, are far too long for applying the same approach to LEO objects.
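
The shift-and-add idea can be sketched in a few lines of NumPy. This is a minimal illustration on synthetic data, not the implementation evaluated in this work: the function names (`shift_and_add`, `search_velocity`, `simulate`), the integer pixel-per-frame velocities, the Gaussian noise model, and the brute-force search grid are all assumptions made for the example. `np.roll` wraps around at the image border, which a real system would need to handle differently.

```python
import numpy as np

def shift_and_add(frames, velocity):
    """Stack frames after undoing a trial motion.

    velocity is a hypothetical (dy, dx) in whole pixels per frame.
    Frame k is shifted by -k * velocity so that an object moving with
    exactly this velocity lands on the same pixel in every frame.
    """
    stacked = np.zeros(frames[0].shape, dtype=np.float64)
    for k, frame in enumerate(frames):
        shift = (-k * velocity[0], -k * velocity[1])
        # np.roll wraps at the borders; acceptable for this toy example only.
        stacked += np.roll(frame, shift=shift, axis=(0, 1))
    return stacked

def search_velocity(frames, max_speed):
    """Brute-force search: return the trial velocity with the highest stacked peak."""
    best_peak, best_velocity = -np.inf, None
    for vy in range(-max_speed, max_speed + 1):
        for vx in range(-max_speed, max_speed + 1):
            peak = shift_and_add(frames, (vy, vx)).max()
            if peak > best_peak:
                best_peak, best_velocity = peak, (vy, vx)
    return best_velocity

def simulate(n_frames=32, size=64, start=(10, 5), velocity=(1, 2), flux=3.0, seed=0):
    """Synthetic short exposures: unit Gaussian noise plus one moving point source."""
    rng = np.random.default_rng(seed)
    frames = rng.normal(0.0, 1.0, size=(n_frames, size, size))
    for k in range(n_frames):
        y = (start[0] + k * velocity[0]) % size
        x = (start[1] + k * velocity[1]) % size
        frames[k, y, x] += flux  # all light falls into a single pixel per frame
    return frames
```

A possible usage, again on the synthetic data only:

```python
frames = simulate()
print(search_velocity(frames, max_speed=3))
```

With the default parameters, the source has an SNR of about 3 in each frame, and the search should recover the injected velocity because the correctly shifted stack grows the signal by the number of frames while the noise grows only by its square root. Each trial velocity is independent of the others, which is what makes this kind of search a natural fit for parallel hardware.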

This work demonstrates and evaluates the implementation of real-time synthetic tracking in a passive optical staring sensor on commercial off-the-shelf (COTS) hardware, which, to the authors’ knowledge, has not been done before. The implementation includes highly parallelized computation on graphics processing units (GPUs), online processing of streamed image data, and data reduction. The system promises to vastly increase the number of detected and tracked LEO objects thanks to better SNR than streaking methods, to improve the precision of orbit determination through the subsequent laser ranging measurements, and to vastly reduce costs compared to state-of-the-art methods of LEO detection and tracking such as radar telescope arrays.
